BSR, the Less-Than-Golden Ratio

Imagine it’s 1963, Roy Orbison is crooning on the radio, and you’re staring at a broken drill collar box. There’s no real mystery—it’s a fatigue failure, and if you’re drilling you’re doing fatigue damage. But for this collar size, it seems like it’s always the box that twists off; for other sizes, it’s always the pin. And that realization has gotten the wheels turning …

If one side always fails, then that’s the weak side, right? And if we strengthen the weak side, we’ll improve the overall life, right?

This is not purely theoretical; this is (the inspired-by-true-events version of) what Doyle Brennager pondered in the era of horn-rimmed glasses (no, not … the first one … you know). He reached the conclusion that, if he could balance the stiffness of the pin and the box, then he would be maximizing the fatigue life of the connection.

Stiffness, let’s see … that would be best described by the section modulus (we use Z as the label for that, you can use whatever your little heart desires), which is essentially the resistance to bending that a particular cross section would have. But that’s only a 2D calculation; you can’t find the section modulus of the whole pin or the whole box. We need to pick a specific cross section on both sides. It seems reasonable to pick the cross sections that fail—the last engaged threads:

Ok, so we characterize the stiffness of the pin and the box with the section modulus of each one’s likely-to-fail cross section. To see if they’re “balanced,” we just create a ratio.
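For the spreadsheet-averse, here is a minimal Python sketch of that ratio. It assumes the usual hollow-circle section modulus, Z = π(D⁴ − d⁴)/(32·D), and the conventional box-over-pin ratio. The function names, parameter names, and example diameters are all made up for illustration and are not taken from any real connection table.

```python
import math


def section_modulus(outer_d: float, inner_d: float) -> float:
    """Section modulus of a hollow circular cross section (units: length**3)."""
    if not 0 <= inner_d < outer_d:
        raise ValueError("need 0 <= inner_d < outer_d")
    return math.pi * (outer_d**4 - inner_d**4) / (32.0 * outer_d)


def bending_strength_ratio(box_od, box_root_d, pin_root_d, pin_id):
    """BSR = Z_box / Z_pin, each evaluated at its last engaged thread.

    box_od      -- outside diameter of the box
    box_root_d  -- box thread root diameter at the box's critical section
    pin_root_d  -- pin thread root diameter at the pin's critical section
    pin_id      -- inside diameter (bore) of the pin
    """
    z_box = section_modulus(box_od, box_root_d)
    z_pin = section_modulus(pin_root_d, pin_id)
    return z_box / z_pin


# Hypothetical diameters in inches (assumed values, not a real connection).
bsr = bending_strength_ratio(
    box_od=8.0, box_root_d=5.25, pin_root_d=5.5, pin_id=2.8125
)
print(f"BSR = {bsr:.2f}")
```

Note that the sketch takes the two thread root diameters as inputs. Working those out from the thread form, taper, and pin length is its own exercise and is not attempted here.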

If it were actually balanced, that BSR should be 1.0. Good ol’ Doyle knew better than to assume that, what with all the changing geometries and loadings going on. So he got some data. Specifically, he looked through inspection reports for drill collars that had cracks in them. He found, first, that collars with a high BSR were more likely to crack in the pin than the box, and collars with a low BSR were more likely to crack in the box than the pin. That works as confirmation that he’s on the right track; BSR, at least in the extremes, tells us something about the “balance” of the connection.

Then he looked for the crossover point, where the pin and the box are equally likely to fail. He found that the magical point was (… drum roll please …) 2.5!

Thus, make sure all your connections have a BSR of 2.5 to maximize their fatigue life. Thank you for your time.

Cough.

Still here, huh?

You may have noticed from the location of the scroll bar in your browser window that I might have a little more to say about it, if you’re willing to stick with me. Ready? OK!

First, Doyle’s idea was a good one; in the 1960s our industry was using all sorts of stupid connections, and the BSR range (generally 2.25 to 2.75 was considered best) allowed us a good, solid, technically-based reason to throw those odd ducks in the trash. So the whole world wound up using BSR to decide whether collar connections were good or bad; API standards even got in on the act. That’s an important step because it means new collars are simply not made with non-acceptable connections. By the time the 1980s roll around, everybody is using BSR to design their drill collar connections for the longest fatigue life possible.

Or so we think.

T H Hill was doing a lot of drill pipe inspections in the late 80s, and with Richie Sambora wailing out our soundtrack, we had one of those wheel-turning moments, too. See, we’d noticed that small collars tend to break in the pin, and large collars tend to break in the box. At this point, hair bands and BSR inspections were both in full swing, so most connections did, in fact, fall within the desirable BSR range. If that range was doing its job, the connections should be “balanced,” and the pin and the box should be equally likely to fail.

But they ain’t.

Working within the BSR paradigm, then, we figured that small, medium, and large collars needed different ranges. Small collars need a lower range to strengthen their pins; large collars need a higher range to strengthen their boxes. Like so:

That got written into the second edition of DS-1, and (after much time and argument) was also written into the newer versions of API’s standards (7-2 for threading and RP7G-2 for inspection).
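If you wanted to automate that size-dependent check, a sketch might look like the following. Big caveat: apart from the 2.25 to 2.75 range mentioned earlier, the band edges and limits in this code are placeholders assumed for illustration. The function names are hypothetical too. Pull the real numbers from the current DS-1 table before trusting any answer it gives you.

```python
def bsr_range_for_collar(collar_od_in):
    """Return an assumed (low, high) BSR range for a collar OD in inches."""
    # ASSUMPTION: illustrative size bands and limits, not quoted from this
    # article. Replace them with the values in the current DS-1 table.
    if collar_od_in < 6.0:
        return (1.8, 2.5)      # small collars: lower range, favors the pin
    if collar_od_in < 8.0:
        return (2.25, 2.75)    # medium collars: the traditional range
    return (2.5, 3.2)          # large collars: higher range, favors the box


def bsr_is_acceptable(bsr, collar_od_in):
    """True if the BSR falls inside the assumed range for that collar size."""
    low, high = bsr_range_for_collar(collar_od_in)
    return low <= bsr <= high


# The same BSR can pass on one collar size and fail on another.
print(bsr_is_acceptable(2.4, collar_od_in=4.75))
print(bsr_is_acceptable(2.4, collar_od_in=9.5))
```

The only design point worth noticing is that the range is looked up from the collar size first, so the pass/fail answer depends on both numbers rather than on BSR alone.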

Now, you see, we have an adjusted range for the BSR that will “balance” our drill collar connections, giving our industry the very best chance of not breaking those poor threads.

So … use DS-1 for your BSR ranges.

Ahem. Thank you for your time. Still not done, huh? It’s a long-winded writer we have here, I tell you what.

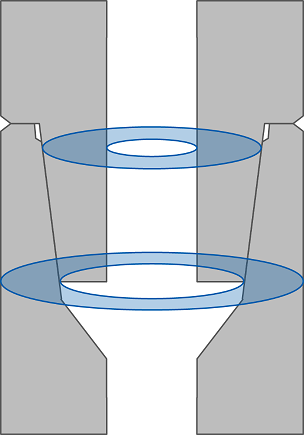

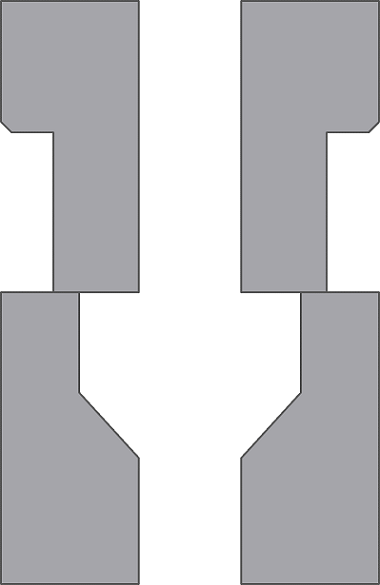

Pretty early on, we were skeptical of BSR just generally. I mean, honestly, our connections don’t look like this:

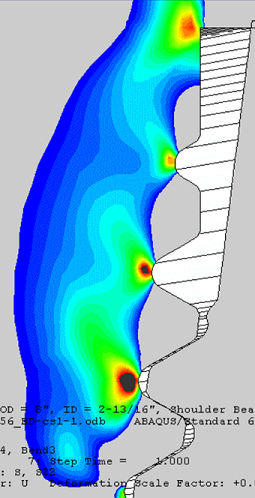

There are multiple cross sections, multiple different gradients of loading, threads (which BSR entirely ignores, for Pete’s sake!). You can’t just describe a connection by two stinkin’ cross sections. Without looking too hard you can find cases where BSR says one thing, and reality just doesn’t agree. So we started using Finite Element Analysis (you know, where a computer cuts your widget into 1.73 bajillion pieces to figure out what the stresses are) to describe the fatigue performance of our connections. The early results were important, even if they should have been obvious: larger-radius thread forms (like the NC connection thread form) give better fatigue lives … often by a factor of 10 or more.

With 3 Doors Down playing on our iPods, we went through that modeling process over and over again, building a database of connection performance numbers. We envisioned this as a replacement to BSR, a way to decide which connections have the longest life based on accurate models. We called it Connection Fatigue Index (CFI, because we love our TLAs—Three Letter Acronyms, that is), and we published it in the 4th edition of DS-1, fully expecting the industry to chuck BSR and start using CFI exclusively.

And now we’re riding unicorns to go watch our favorite Quidditch team play.

Ok, so maybe we were a little optimistic about getting rid of BSR, but the plan is still valid. In fact, I worked hard to fix one of the difficulties in using CFI, normalizing away the exact curvature you apply. (In 4th edition you had to choose 1-, 3-, or 6°/100' of curvature to apply to your drill collars in order to read a CFI number out of the table. In 5th edition we normalized it so that all the nCFI numbers compare apples-to-apples with each other. It’s better.) All our modeling leads to a pile of interesting conclusions about what actually makes a difference in the fatigue life of your connection, like:

- Rounder thread roots are better. That should be obvious, but it flies in the face of the old BSR ranges which told us to use 6-5/8 Reg connections instead of NC56 connections on 8" drill collars. NC is always better than Reg for fatigue, full stop.

- The pin and the box stresses are fundamentally different. On the pin side, makeup torque causes the point stresses to go past yielding. By itself that’s significant, but it means that other things you might do (lower the makeup torque, lower the overall bending) often don’t have much of an effect on the fatigue life of that pin because the critical material is already plastic.

- On the box side, makeup stress does not cause yielding. That means that the box life is very dependent on the makeup torque you apply. The box back is also more exposed to corrosion, which will speed up fatigue damage. These things together help explain why large collars crack in the box instead of the pin. It’s less about “balance” and much more about the greater corrosion exposure and higher applied torques on those big collars.

Right now, then, you can train yourself to stop treating BSR values as the way to decide which connection is best. Familiarize yourself with the nCFI tables in DS-1 Volume 2, and make a point of referring to them when you have that choice to make. It will improve your results, I promise.

…

Ok, look, I’m warning you: if you keep reading now, you’re going to get a strong earful of Grant-flavored opinion. I tried to get this into DS-1, and I got shot down, so there are many reasonable people out there that I just couldn’t convince, and there’s a better-than-average chance that’s because I’m nuttier than squirrel poo.

You have been warned.

BSR has always been fundamentally an inspection criterion: the OD has to be the correct size, and the ID has to be the correct size. Implicitly, we assume that if the OD is too small, or the ID is too big, then the performance will begin to degrade.

And you know what happens when you assume.

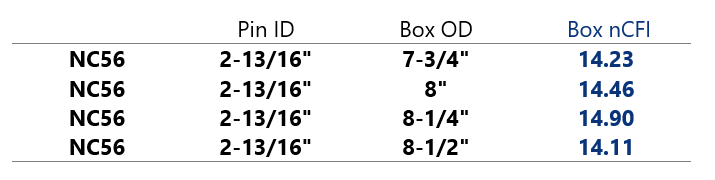

In doing all that research to figure out how to normalize the CFI values, I went digging through every single model we had available. I moved things around and around, trying to see the problem and the trends from different angles, and this one slapped me in the face. Let’s choose one connection type (say, NC46 or 6-5/8 Reg) with a single pin ID. Then let’s see what happens when we adjust the box OD that’s associated with it. For example:

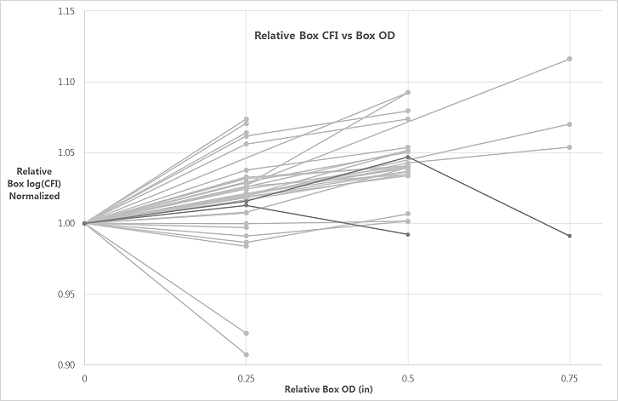

Ponder that table for a moment. Going down, the only thing that changes is the box OD, and it’s getting bigger. Presumably that means the box is getting stronger. But the nCFI value (that is, the fatigue life as best we can tell) is not getting longer—the biggest box is weaker (in fatigue) than the smallest box. This is not an anomaly. If I plot all the models I have in a similar way, normalized so I can put them all together, I get:

As the box gets bigger, we would want the fatigue life to go up. But … sometimes it goes up, sometimes it goes down, sometimes it stumbles around like a drunken mountain goat. If you want to make the box stronger in fatigue, I don’t know whether you should make the OD bigger or smaller. It depends. If you want a stronger pin in fatigue, ditto.
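For contrast, here is what BSR itself does under the same experiment. This is a small Python sketch with made-up diameters (assumed for illustration, not one of our models), using the hollow-circle section modulus and the box-over-pin ratio. Only the box OD changes.

```python
import math


def section_modulus(outer_d, inner_d):
    """Section modulus of a hollow circular cross section."""
    return math.pi * (outer_d**4 - inner_d**4) / (32.0 * outer_d)


def bsr(box_od, box_root_d, pin_root_d, pin_id):
    """Box section modulus divided by pin section modulus."""
    return section_modulus(box_od, box_root_d) / section_modulus(pin_root_d, pin_id)


# Hypothetical geometry in inches: pin and thread diameters held fixed,
# box OD stepped upward.
box_ods = [6.0, 6.25, 6.5, 6.75, 7.0]
values = [bsr(od, box_root_d=4.0, pin_root_d=4.4, pin_id=2.25) for od in box_ods]

for od, value in zip(box_ods, values):
    print(f"box OD {od:.2f} in -> BSR {value:.2f}")

# BSR can only climb as the box OD grows, whatever the fatigue models say.
always_rising = all(a < b for a, b in zip(values, values[1:]))
print("BSR rises at every step:", always_rising)
```

BSR marches upward with every step in box OD, because that is all the formula can do. The modeled fatigue life is under no such obligation, which is the mismatch this whole section is about.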

So here’s the conclusion: I can’t make an “acceptable range” of OD or ID sizes for drill collar connections, because I don’t know which direction is better or worse. Trying to inspect BHA (bottom hole assembly) connections on a fatigue-performance basis is futile.

That’s a pretty bold conclusion, but I can’t see another from under this tin-foil hat. Instead, I think we go back to manufacturing and make sure we’re making the sizes that make the most sense, then trust that design work over the life of the connection. Inspection criteria should be based on something else (makeup torque, maybe?), not fatigue performance.

I did warn you.